I fine-tuned the GPT-2 language model (345 million parameters) on tweets from people’s Twitter accounts to create AI versions of them, and then had the bots rewrite real tweets or complete tweets based on various prompts (short sentence starters like “The meaning of life is”). To make the process end-to-end (prompt to picture), I add 5 candidates from each bot to a pool of tweets for A/B voting. Once the top tweets are selected, I use OpenCV to render them as an image (see below).
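The candidate pool and A/B voting step can be sketched roughly as follows. This is a minimal illustration, not the code used for this project: the function names `build_pool` and `rank_by_ab_votes`, the vote format (pairs of winner and loser indices), and ranking by win rate are all assumptions made for the example.

```python
from collections import defaultdict


def build_pool(candidates_by_bot, per_bot=5):
    """Flatten {bot_name: [tweets]} into a list of (bot_name, tweet) pairs.

    Keeps at most `per_bot` candidates from each bot (5 in the post).
    """
    return [
        (bot, tweet)
        for bot, tweets in candidates_by_bot.items()
        for tweet in tweets[:per_bot]
    ]


def rank_by_ab_votes(pool, votes):
    """Rank pool entries by A/B win rate (an assumed scoring rule).

    `votes` is an iterable of (winner_index, loser_index) pairs, where
    each index points into `pool`. Entries that were never shown get a
    win rate of 0. Ties are broken by original pool order.
    """
    wins = defaultdict(int)
    shown = defaultdict(int)
    for winner, loser in votes:
        wins[winner] += 1
        shown[winner] += 1
        shown[loser] += 1

    def win_rate(index):
        return wins[index] / shown[index] if shown[index] else 0.0

    order = sorted(range(len(pool)), key=lambda i: (-win_rate(i), i))
    return [pool[i] for i in order]


# Hypothetical example data: two bots, three candidate tweets in total.
pool = build_pool({"bot_a": ["a1", "a2"], "bot_b": ["b1"]})
votes = [(2, 0), (2, 1), (0, 1)]  # b1 beats a1, b1 beats a2, a1 beats a2
ranked = rank_by_ab_votes(pool, votes)
```

The top entries of `ranked` would then be the tweets passed on to the image-rendering step.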

Some of these are funny, some are profound, some are dark in a way that gives me pause. For me, it’s a nice tool that allows me to ask: Based on the tweets of person X, what would they say about topic Y, and how would they say it? Unfortunately, the networks are better at capturing style than semantics, so this approach is not yet useful as a general querying tool.

Here’s a real tweet about tunnels from Elon Musk rewritten by AI versions of Justin Bieber, Kanye West, and Katy Perry:

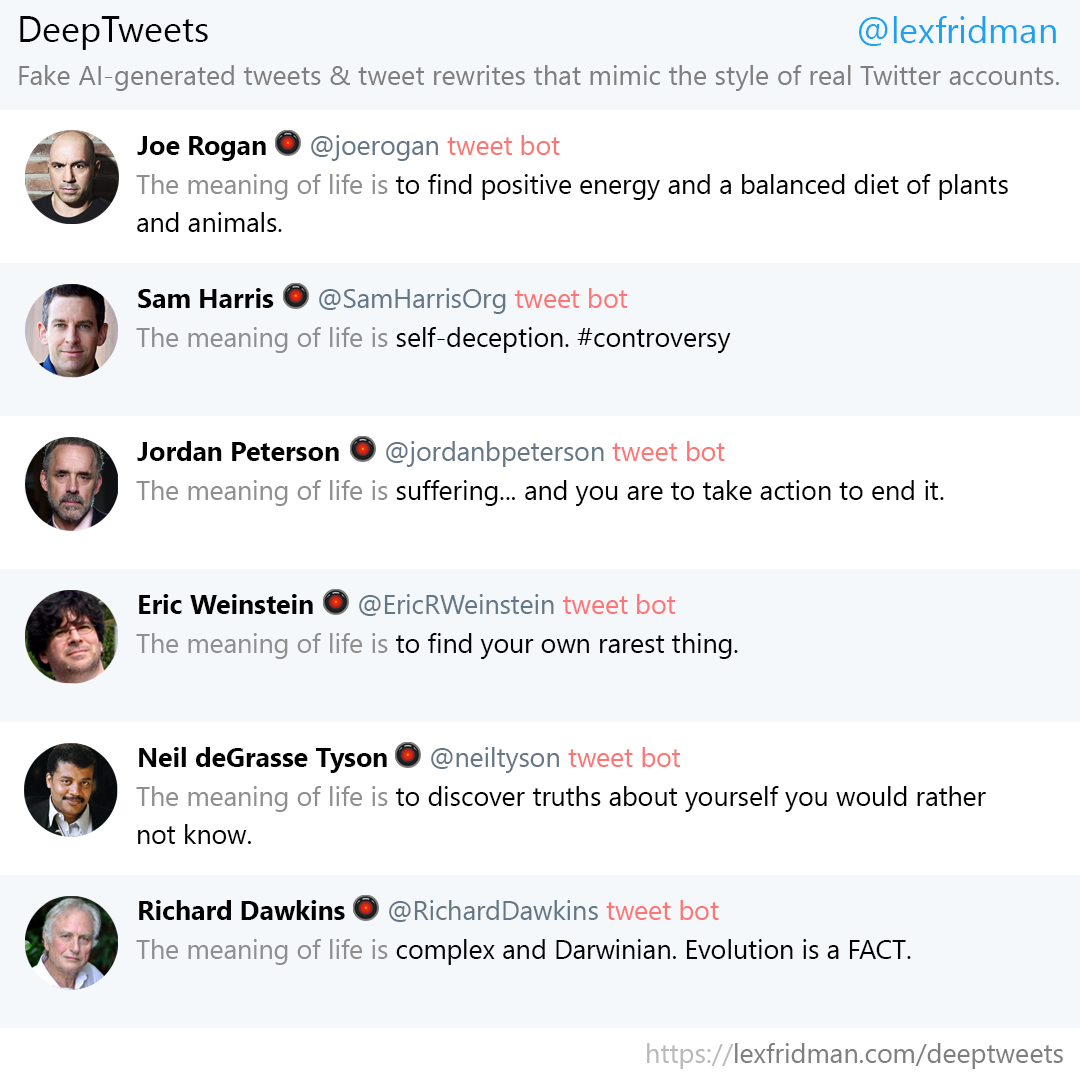

Here’s Joe Rogan, Sam Harris, Jordan Peterson, Eric Weinstein, Neil deGrasse Tyson, and Richard Dawkins completing the prompt “The meaning of life is…”:

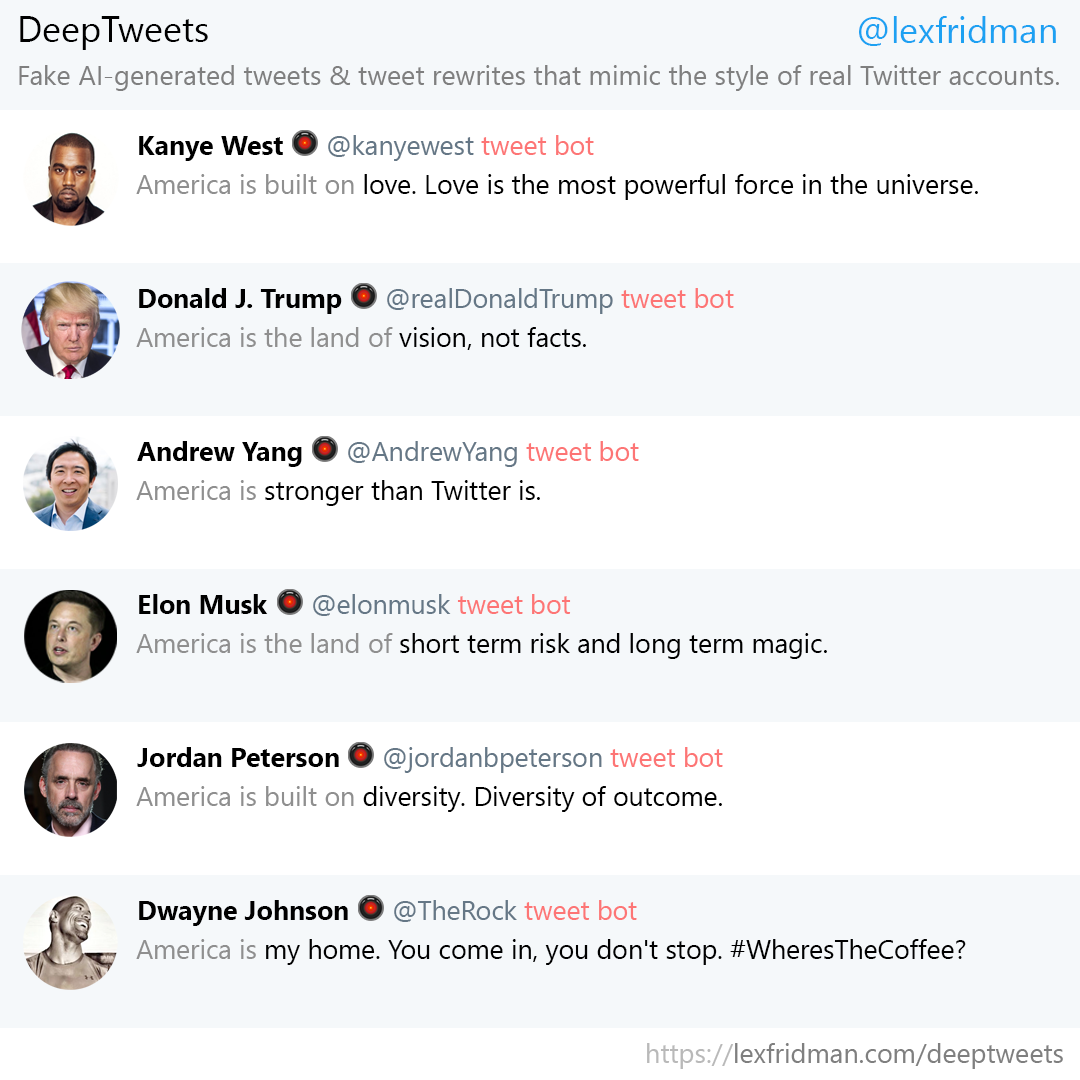

Here’s Kanye West, Donald Trump, Andrew Yang, Elon Musk, Jordan Peterson, and Dwayne “The Rock” Johnson completing the prompts “America is”, “America is the land of”, and “America is built on”:

So far I’ve trained AI versions of the people listed below. If you have suggestions for who or what else you would like to see, let me know. I’ll release the models, code, and more tweet bot rewrites and conversations soon. I’m still playing around with what works best for both training and generation. Everything together took ~4 hours of programming time and ~2 weeks of neural network training time.

Fine-tuned language models I’ve trained so far (in alphabetical order):

Barack Obama

Bernie Sanders

Conan O’Brien

Deepak Chopra

Donald Trump

Dwayne “The Rock” Johnson

Ellen DeGeneres

Elon Musk

Eric Weinstein

Hillary Clinton

Jimmy Fallon

Joe Rogan

Jordan Peterson

Justin Bieber

Kanye West

Katy Perry

Kevin Hart

Lex Fridman

Neil deGrasse Tyson

Richard Dawkins

Ricky Gervais

Sam Harris

If you would like to learn about the basics behind the techniques used for this, you can watch my lectures on deep learning or watch my conversation with Greg Brockman (CTO, OpenAI) about the GPT-2 language model specifically: