It may be several decades before sensors, algorithms, and data collection are sufficiently developed to “solve” the full driving task. Until that time, human beings will remain an integral part of the driving task, monitoring the AI system as it performs anywhere from just over 0% to just under 100% of the driving. We launched the MIT Advanced Vehicle Technology (MIT-AVT) study to understand how human-AI interaction in driving can be safe and enjoyable, through large-scale real-world driving data collection and large-scale deep-learning-based parsing of that data. The emphasis is on objective, data-driven analysis.

Update: Please note that the initial version of this paper as it went through the review process referred to this study as the MIT Autonomous Vehicle Technology Study. It was renamed to MIT Advanced Vehicle Technology Study as the scope of our data collection and research efforts broadened to include vehicle technology beyond vehicle autonomy.

The following video introduces the study:

See the arXiv paper or the IEEE Access published version for details on methods of data collection, processing, and study design. If you find this work useful in your own research, see its reference on Google Scholar.

Contact: This study (and data collection) is ongoing. If you are interested in this study, please visit the current study website and use the contact details there if you have any questions. Please note that this is not a way to get in contact with me (Lex). I am no longer actively involved with this work.

We have instrumented Tesla Model S and Model X vehicles, Volvo S90 vehicles, Range Rover Evoque vehicles, and Cadillac CT6 vehicles for both long-term (over a year per driver) and medium-term (one month per driver) naturalistic driving data collection. Furthermore, we are continually developing new methods for analysis of the massive-scale dataset collected from the instrumented vehicle fleet. The recorded data streams include IMU, GPS, CAN messages, and high-definition video streams of the driver face, the driver cabin, the forward roadway, and the instrument cluster (on select vehicles). The study is ongoing and growing.

The backbone of a successful naturalistic driving study is the hardware and low-level software that performs the data collection. In the MIT-AVT study, that role is served by a system named RIDER (Real-time Intelligent Driving Environment Recording system). The following video describes the system:

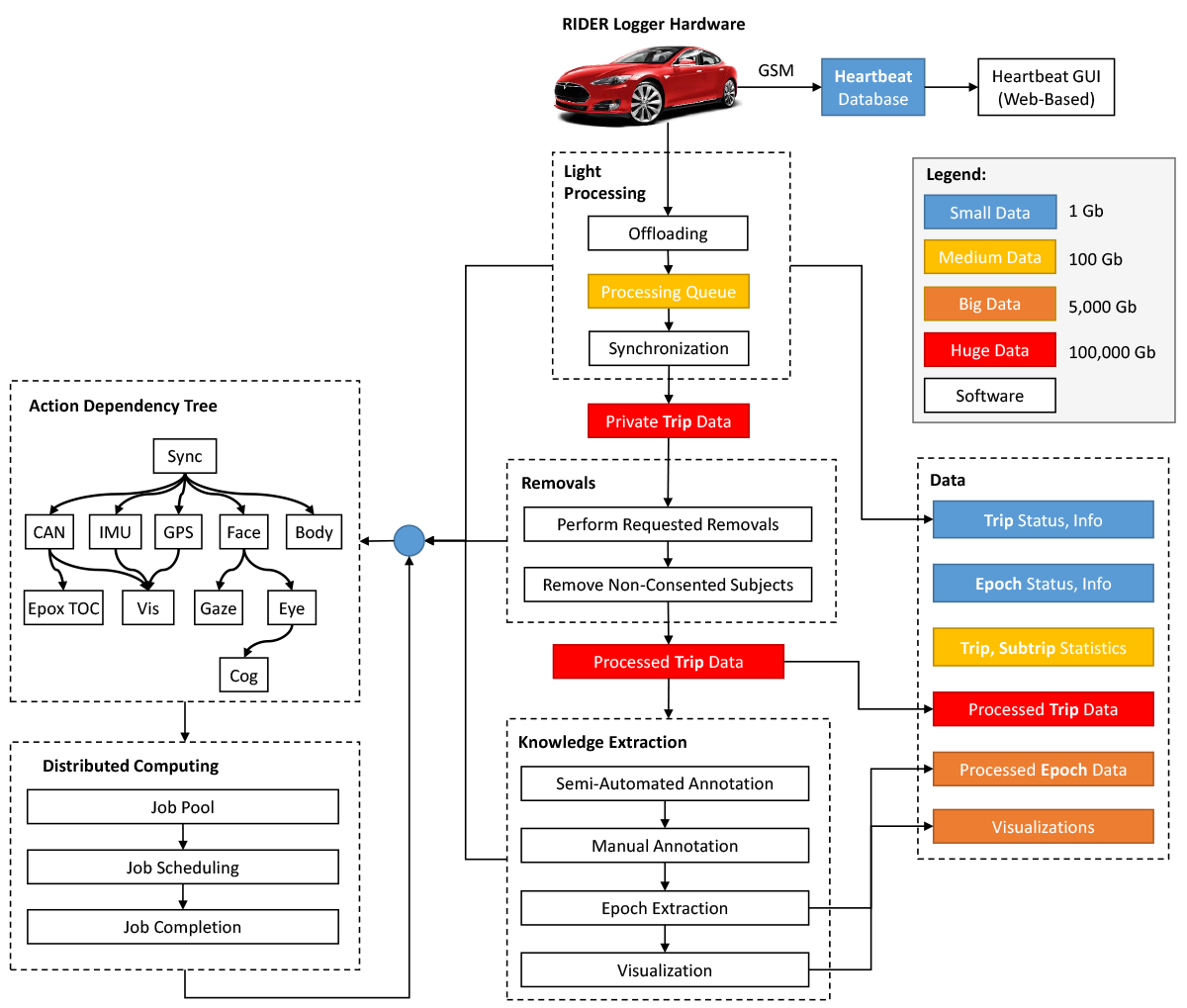

Building on the robust, reliable, and flexible hardware architecture of RIDER is a vast software framework. It handles the recording of raw sensor data and takes that data through many processing steps, across thousands of GPU-enabled compute cores, to extract knowledge and insights about human behavior in the context of autonomous vehicle technologies. The following figure of the pipeline shows the journey from raw timestamped sensor data to actionable knowledge. The high-level steps are (1) data cleaning and synchronization, (2) automated or semi-automated data annotation, context interpretation, and knowledge extraction, and (3) aggregate analysis and visualization.
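To make step (1) more concrete, here is a minimal sketch of one common way to synchronize two timestamped streams: pair each sample of a reference stream (say, video frames) with the nearest-in-time sample of another stream (say, GPS fixes). This is an illustration only, not the RIDER or MIT-AVT implementation; the function names `nearest_index` and `synchronize`, the `max_gap` parameter, and the sample values are all hypothetical.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Return the index of the entry in sorted `timestamps` closest to `t`."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbor is closer; ties go to the earlier sample.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def synchronize(reference, other, max_gap):
    """Pair each reference sample with the nearest sample from `other`.

    Both inputs are lists of (timestamp_seconds, value) sorted by timestamp.
    If the nearest sample is more than `max_gap` seconds away, the reference
    sample is paired with None instead (hypothetical policy for this sketch).
    """
    other_ts = [ts for ts, _ in other]
    aligned = []
    for ts, value in reference:
        if not other_ts:
            aligned.append((ts, value, None))
            continue
        j = nearest_index(other_ts, ts)
        match = other[j][1] if abs(other_ts[j] - ts) <= max_gap else None
        aligned.append((ts, value, match))
    return aligned


# Hypothetical data: video frames at roughly 30 Hz, GPS fixes at 10 Hz.
frames = [(0.0, "frame0"), (0.033, "frame1"), (0.066, "frame2"), (1.0, "frame3")]
gps = [(0.0, "gps_a"), (0.1, "gps_b")]
aligned = synchronize(frames, gps, max_gap=0.05)
```

Nearest-timestamp matching with a maximum gap is only one possible design choice. Interpolating between neighboring samples, or resampling every stream onto a common clock, are alternatives, and the right choice depends on the sensor rates and on how the downstream annotation steps consume the data.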

Acknowledgement

The authors would like to thank MIT colleagues and the broader driving and artificial intelligence research community for their valuable feedback and discussions throughout the development and ongoing operation of this study.

They would also like to thank the many vehicle owners who have provided and continue to provide valuable insights (via email or in-person discussion) about their experiences interacting with these systems. Lastly, they would like to thank the annotation teams at MIT and Touchstone Evaluations for their help in continually evolving a state-of-the-art framework for annotation and discovering new essential elements necessary for understanding human behavior in the context of advanced vehicle technologies.

Support for this work was provided by the Advanced Vehicle Technology (AVT) consortium at MIT. The views and conclusions being expressed are those of the authors, and have not been sponsored, approved, or necessarily endorsed by members of the consortium. All authors listed as affiliated with MIT contributed to the work only during their time at MIT as employees or visiting graduate students.